Crowd Computing & Human-Centered AI

- Human-in-the-loop AI

- Human-AI interaction

- User Modeling and Explainability

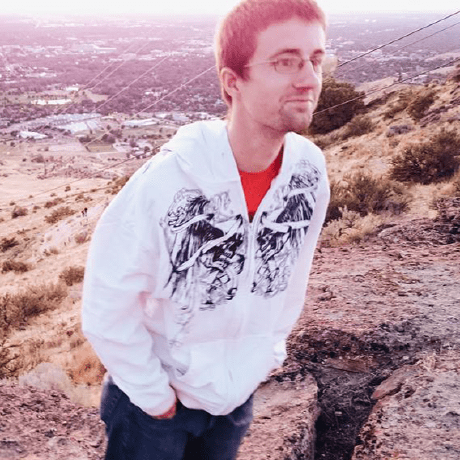

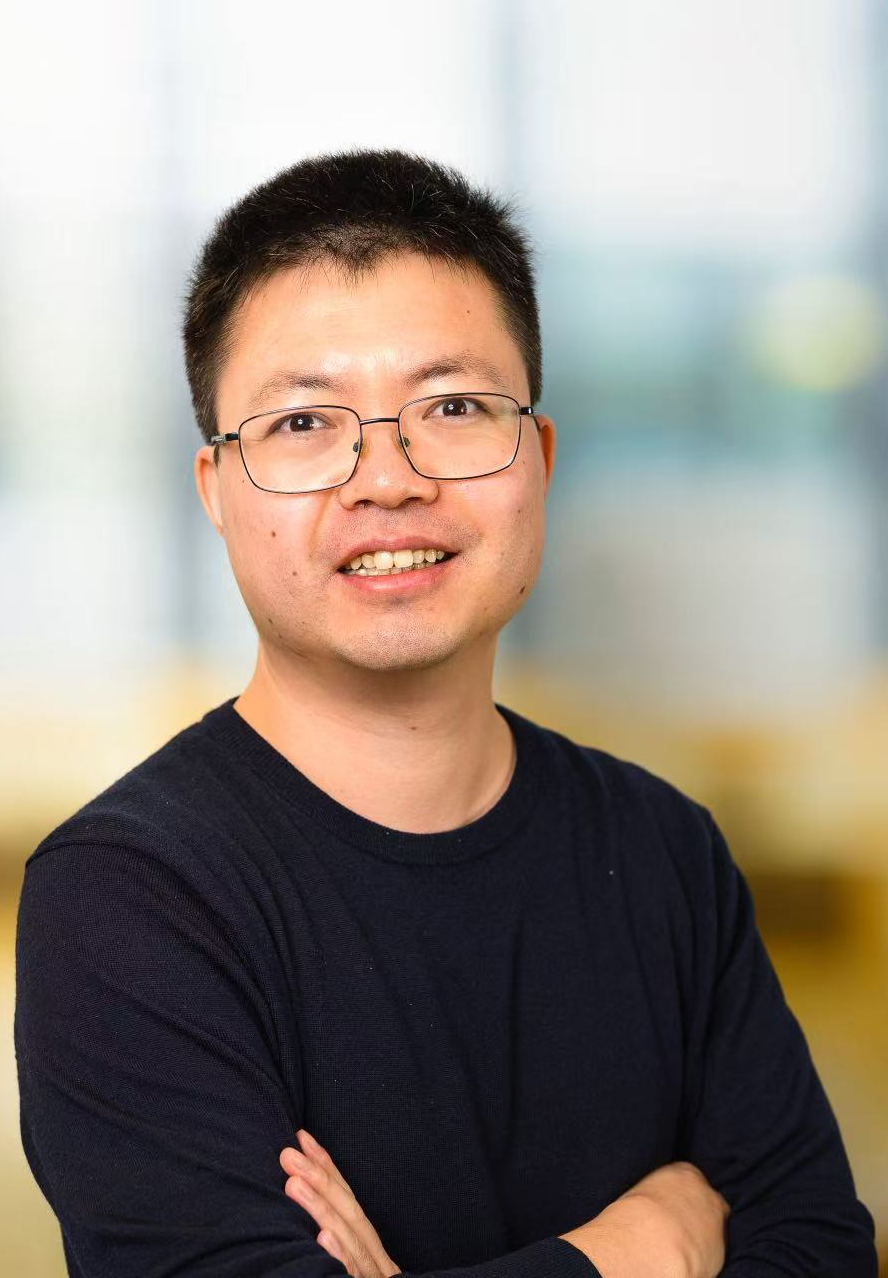

This research theme brings together two research lines, "Epsilon" and "Kappa". The Human-in-the-loop AI and Human-AI interaction activities are jointly coordinated and led by Ujwal Gadiraju and Jie Yang; the User Modeling and Explainability activities are coordinated and led by Nava Tintarev.

Human-in-the-loop AI

Machine learning models have been criticized for their lack of robustness, fairness, and transparency. For models to learn comprehensive, fine-grained, and unbiased patterns, they must be trained on a large number of high-quality data instances whose distribution is representative of real application scenarios. Creating such data is a long, laborious, and expensive process, and sometimes it is impossible. In this theme, we analyze the fundamental computational challenges in the quest for robust, interpretable, and trustworthy AI systems. We argue that tackling these challenges calls for a novel crowd computing paradigm in which diverse and distributed crowds contribute knowledge at the conceptual level.

Human-AI Interaction

In light of recent advances in AI and the growing role of AI technologies in human-centered applications, a deeper exploration of the interaction between humans and machines is urgently needed. Within this theme, we will explore and develop fundamental methods and techniques to harness the virtues of AI in ways that benefit society at large. From an interaction perspective, more robust and interpretable systems can help build trust and increase system uptake. As AI systems become more commonplace, people must be able to make sense of their encounters with such systems and interpret their interactions with them.

User Modeling & Explainability

Explanations are needed when there is a large knowledge gap between humans and AI or information systems, or when joint understanding is only implicit. This kind of joint understanding is becoming increasingly important, for example, when news providers and social media systems such as Twitter and Facebook filter and rank the information that people see. To link the mental models of systems and people, our work develops ways to give users a level of transparency and control that is meaningful and useful to them. We develop methods for generating and interpreting rich metadata that help bridge the gap between computational and human reasoning (e.g., for understanding subjective concepts such as diversity and credibility). We also develop a theoretical framework for generating better explanations (both as text and as interactive explanation interfaces) that adapts to a user and their context. To better understand the conditions under which explanations are effective, we study when to explain (e.g., surprising content, lean in/lean out, risk, complexity) and what to adapt to (e.g., group dynamics, a user's personal characteristics).

Projects

- ENSURE – ExplaiNing SeqUences in REcommendations.

  PhD studentship funded through a TU Delft Technology Fellowship.

- NL4XAI – Interactive Natural Language Technology for Explainable Artificial Intelligence.

  A project funded by the European Union’s Horizon 2020 research and innovation programme under the Marie Skłodowska-Curie Grant Agreement No. 860621.

- Representing diverse views for polarized topics online.

  PhD studentship co-funded by IBM Benelux and CLICK_NL.

- Dandelion – A Conversational Crowd Computing Platform

  Dandelion is a conversational agent that serves as a feedback tool for students. With most of our teaching activities taking place online, we miss out on normal interaction with our students and therefore pick up fewer signals about their well-being. How are they actually coping in this difficult situation, and how are they really feeling? This requires extra attention.

- Campus Trainbot – Minting Stress Management Coaches During a Pandemic

  Trainbot is a conversational interface that trains non-experts in Motivational Interviewing, a counselling approach used to treat anxiety, depression, and other mental health problems.

- UNCAGE – Conversational Agents for Education

  Within this 4TU.CEE project, we will develop and evaluate conversational agents that address important challenges in education.

Publications

- List of publications
